In this release, moderation and spam handling have been incorporated into the abuse workflow, which runs as follows:

Moderated -> Reported -> Appealed -> Reviewed -> Hidden or Removed

Moderation no longer has a separate workflow in this release. It is now a stage in the abuse workflow that collects information to decide whether content should be presumed abusive. Moderated content then joins the cycle at the same point as other abusive content: the reporting stage.
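The stage sequence above can be sketched as a small state table. This is only an illustration: the `Stage`, `NEXT_STAGES`, and `advance` names are hypothetical and not part of the product, and the sketch models only the linear sequence printed above, so any other paths the product allows (for example, content cleared on review) are not represented.

```python
from enum import Enum


class Stage(Enum):
    """Hypothetical names for the stages listed in the workflow line."""
    MODERATED = "Moderated"
    REPORTED = "Reported"
    APPEALED = "Appealed"
    REVIEWED = "Reviewed"
    HIDDEN = "Hidden"
    REMOVED = "Removed"


# Forward transitions read directly off the printed stage list (an assumption:
# the real product may permit additional or shorter paths).
NEXT_STAGES = {
    Stage.MODERATED: {Stage.REPORTED},
    Stage.REPORTED: {Stage.APPEALED},
    Stage.APPEALED: {Stage.REVIEWED},
    Stage.REVIEWED: {Stage.HIDDEN, Stage.REMOVED},
    Stage.HIDDEN: set(),
    Stage.REMOVED: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Return the target stage if the move is allowed, else raise ValueError."""
    if target not in NEXT_STAGES[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target
```

In this sketch, moderated content has exactly one way forward, into `REPORTED`, which mirrors the statement that moderation feeds into the reporting stage.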

Abuse

Any content, including comments and replies, can be flagged as abusive, which places it in the abuse workflow. Content that has been reported but has not yet reached the minimum number of reports can be viewed and moderated. Once the number of reports reaches the minimum threshold (which is configurable), the content enters the appeal queue, where users with the appropriate permission (the appeal board) decide whether to hide it.

If content is reported enough times, or the author's posts are picked up by the abuse filter plugins, the author is notified by email and given a chance to appeal the finding. If the finding goes uncontested, or the review board finds the content abusive, the content is hidden from the community. If enough of an author's content is found to be abusive, the author can also be found abusive and removed from the site, along with their content.

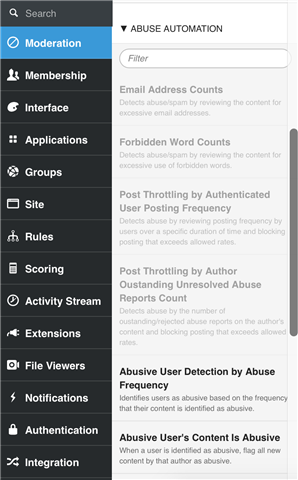
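The routing rules described in this section can be summarized in a few lines of code. The following is a minimal sketch under stated assumptions: the function names, the queue labels, and the threshold value used in the usage note are all hypothetical and do not come from the product.

```python
def queue_for(report_count: int, flagged_by_filter: bool, min_reports: int) -> str:
    """Decide where a piece of content sits in the abuse workflow.

    min_reports stands in for the configurable minimum report threshold;
    the returned labels are illustrative only.
    """
    if flagged_by_filter or report_count >= min_reports:
        # Enough reports, or picked up by an abuse filter plugin:
        # the author is notified and the content enters the appeal queue.
        return "appeal"
    if report_count > 0:
        # Reported, but below the threshold: can still be viewed and moderated.
        return "reported"
    return "none"


def content_is_hidden(author_appealed: bool, board_finds_abusive: bool) -> bool:
    """Content is hidden if the finding is uncontested or upheld on review."""
    if not author_appealed:
        return True
    return board_finds_abusive
```

For example, with a hypothetical threshold of 3, `queue_for(2, False, 3)` leaves the content in the reported state, while a third report or a filter hit moves it to the appeal queue.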

The abuse filter plugins include:

- Email address counts

- Forbidden word counts

- Link counts

- Posting frequency by authenticated users

- Posting frequency by IP address

- Abusive user detection by abuse frequency

- Abusive user's content is abusive

- Akismet

- Authenticated user content posting frequency

- Moderate content by moderated users

These plugins can be found at Pencil Tool > Administration > Moderation Queue > Abuse Automation. Greyed-out plugins have been disabled (using a check box on the plugin's Options tab).

Most abuse filter plugins also have a tab that allows you to exclude specific types of content from review.
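To make the idea of a count-based filter concrete, here is a minimal sketch of how a link-count check with an enable switch and an exclusion list might behave. It is not the product's plugin API: the `LinkCountFilter` class, its fields, the default limit, and the content type names are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass, field

# Simple pattern for http/https links; a real filter would likely be stricter.
LINK_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)


@dataclass
class LinkCountFilter:
    """Hypothetical stand-in for a link-count abuse filter."""
    max_links: int = 3              # assumed limit, not a product default
    enabled: bool = True            # mirrors the enable/disable check box
    excluded_content_types: set = field(default_factory=set)

    def is_suspect(self, content_type: str, body: str) -> bool:
        # Disabled filters and excluded content types are never flagged.
        if not self.enabled or content_type in self.excluded_content_types:
            return False
        return len(LINK_PATTERN.findall(body)) > self.max_links
```

A filter created as `LinkCountFilter(max_links=1, excluded_content_types={"wiki"})` would flag a comment containing two links but ignore the same text posted as a wiki page.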

During development, software engineers can be added to an application and take part in platform moderation.

Moderation

Moderation extends to all posts, comments, and content in this release. When a user is moderated, all of that user's content is moderated.

Moderation and spam handling tie into the abuse workflow at these points:

- Preemptively moderating spam content and comments.

- Detecting spam when the content is posted.

- Reviewing and/or removing abusive content after it has been published.

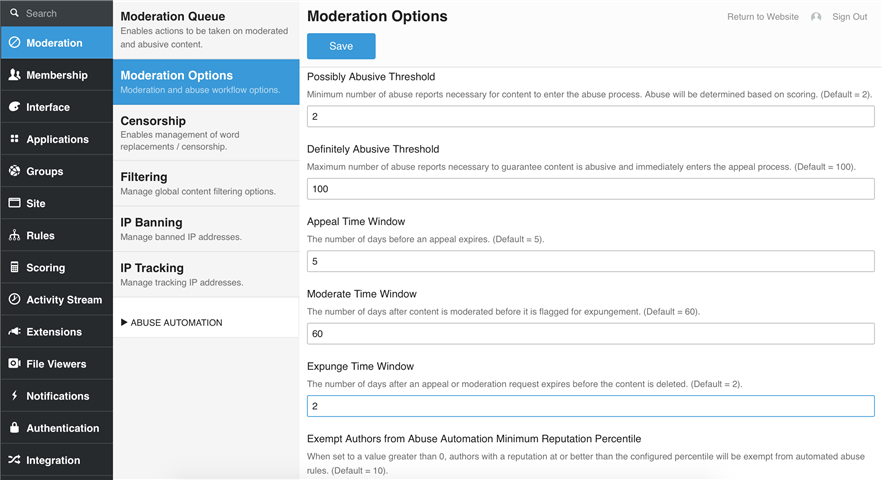

Moderation has the following configurable options:

- Possibly abusive threshold

- Definitely abusive threshold

- Appeal time window

- Moderate time window

- Expunge time window

- Minimum reputation percentile for exempting authors from abuse automation
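The configurable options above can be pictured as a single settings object. The sketch below is illustrative only: the field names, the use of days as the unit for time windows, the numeric abuse score, and the rule that authors at or above the reputation percentile are exempt are all assumptions, since the source does not specify units or semantics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModerationOptions:
    """Hypothetical container for the six moderation options."""
    possibly_abusive_threshold: int
    definitely_abusive_threshold: int
    appeal_window_days: int        # unit is an assumption
    moderate_window_days: int      # unit is an assumption
    expunge_window_days: int       # unit is an assumption
    exempt_min_reputation_percentile: int

    def __post_init__(self):
        # A "possibly" threshold above the "definitely" one would be incoherent.
        if self.possibly_abusive_threshold > self.definitely_abusive_threshold:
            raise ValueError("possibly abusive threshold exceeds definitely abusive threshold")


def classify(score: int, author_percentile: int, options: ModerationOptions) -> str:
    """Classify content by an assumed numeric abuse score."""
    if author_percentile >= options.exempt_min_reputation_percentile:
        return "exempt"
    if score >= options.definitely_abusive_threshold:
        return "definitely abusive"
    if score >= options.possibly_abusive_threshold:
        return "possibly abusive"
    return "ok"
```

Keeping the two thresholds in one validated object makes it harder to configure a "possibly abusive" level that sits above the "definitely abusive" level.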

Content is moderated through the Abuse Management interface.

While content is in the abuse workflow, users who try to access it can see that it still exists but is under review. If they navigate to the page where the content is located, they see a message that it is currently unavailable because it is pending review.